Netinfo Security, 2026, Vol. 26, Issue (2): 236-250. doi: 10.3969/j.issn.1671-1122.2026.02.005

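A Fusion Scheme of Multi-Key Homomorphic Encryption and Differential Privacy for Distributed Learning

WANG Teng, FAN Kunwei, ZHANG Yao

School of Cyberspace Security, Xi'an University of Posts and Telecommunications, Xi'an 710121, China

Received: 2025-04-15 | Online: 2026-02-10 | Published: 2026-02-23

Corresponding author: WANG Teng, wangteng@xupt.edu.cn

About the authors: WANG Teng (born 1995), female, from Shaanxi, associate professor, Ph.D.; main research interests: data security and privacy protection. FAN Kunwei (born 2000), male, from Shaanxi, master's student; main research interests: machine learning and privacy protection. ZHANG Yao (born 2001), female, from Shaanxi, master's student; main research interest: privacy protection for real-time data streams.

Funding: National Natural Science Foundation of China (62102311); Young Talent Support Program of the Shaanxi Association for Science and Technology (20240116); Key Research and Development Program of Shaanxi Province (2025CY-YBXM-069)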

Abstract:

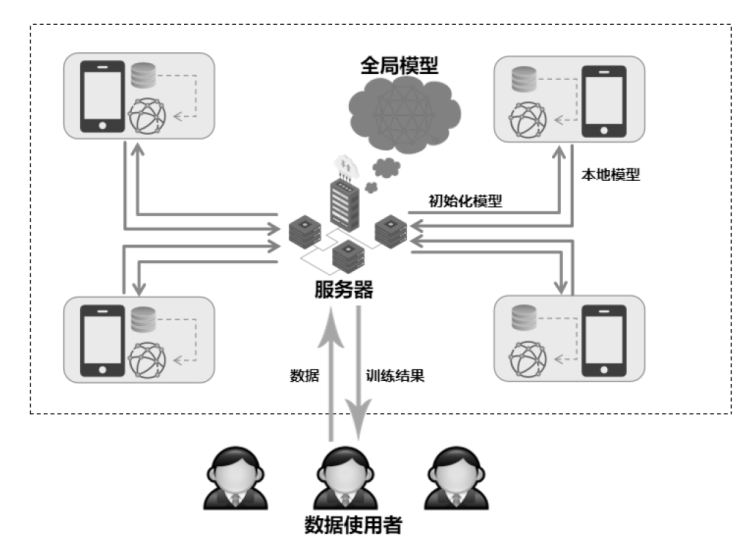

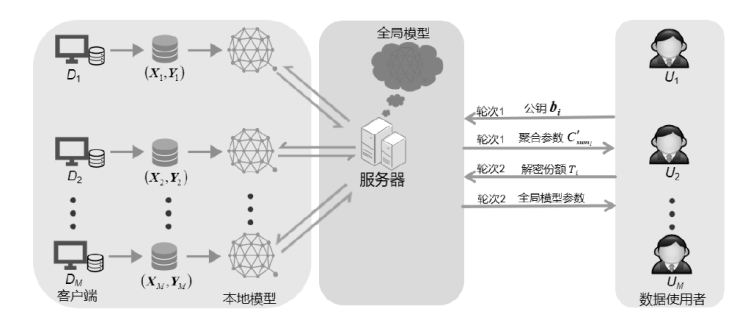

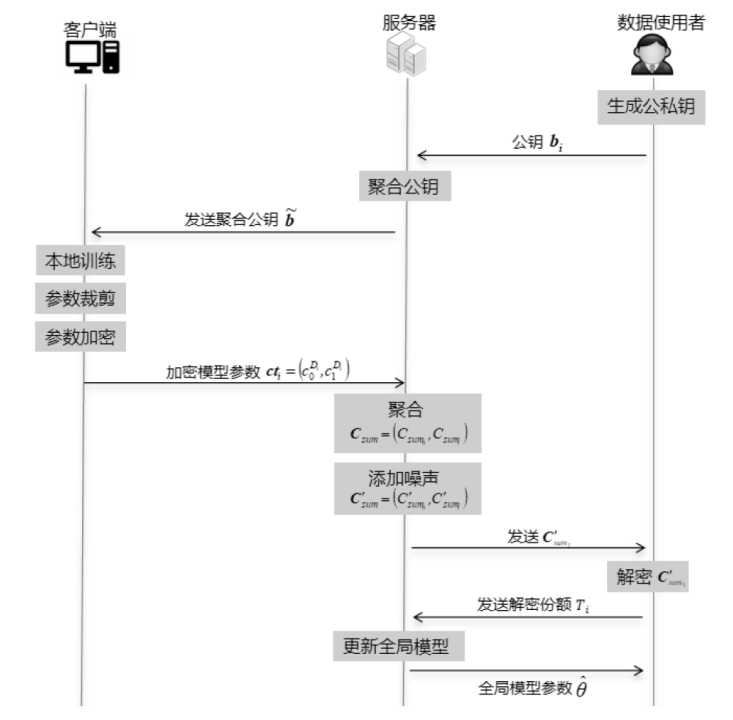

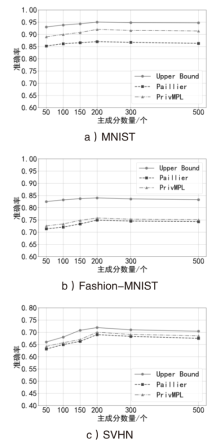

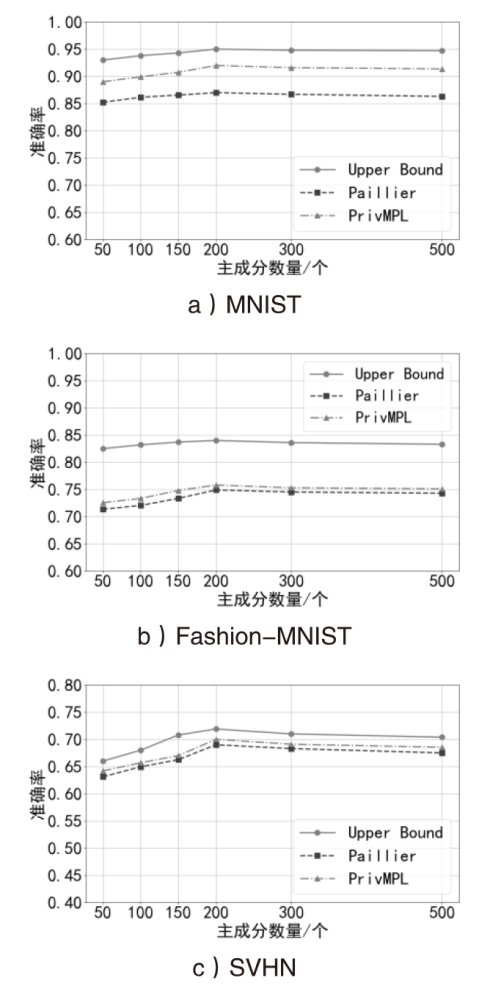

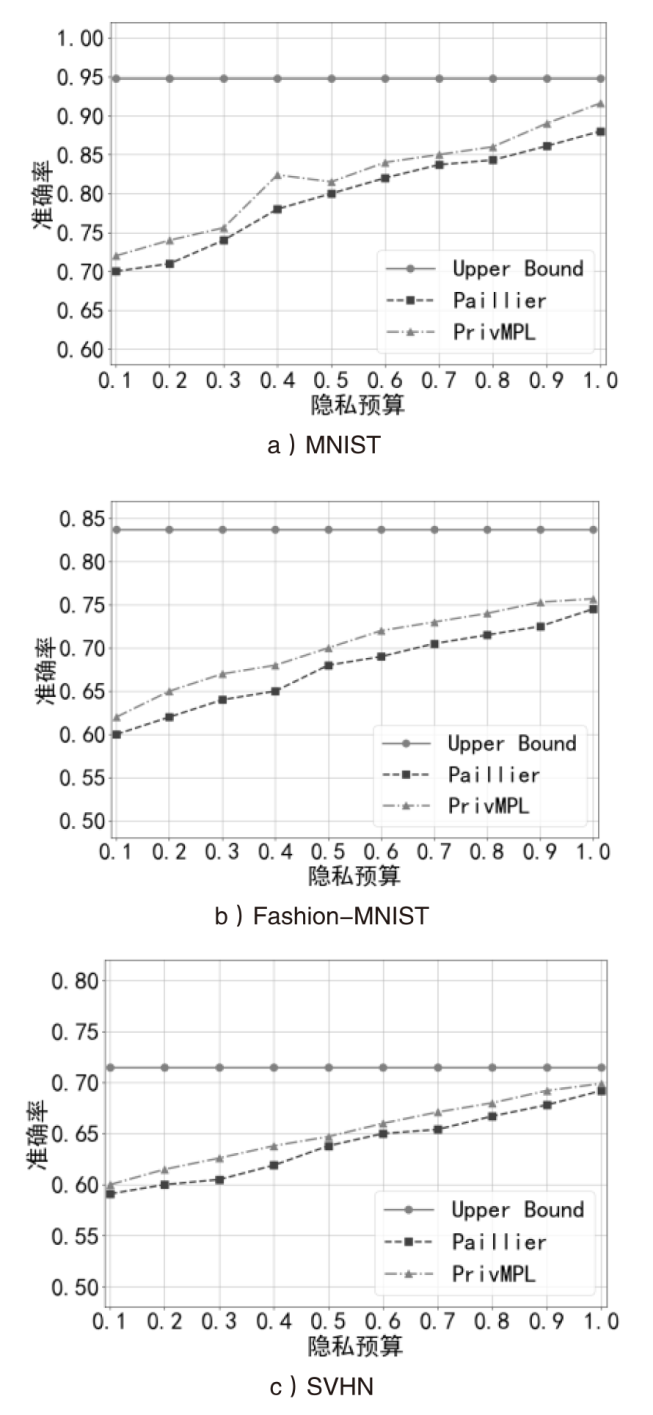

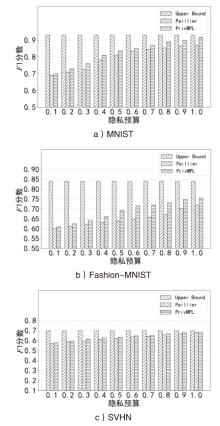

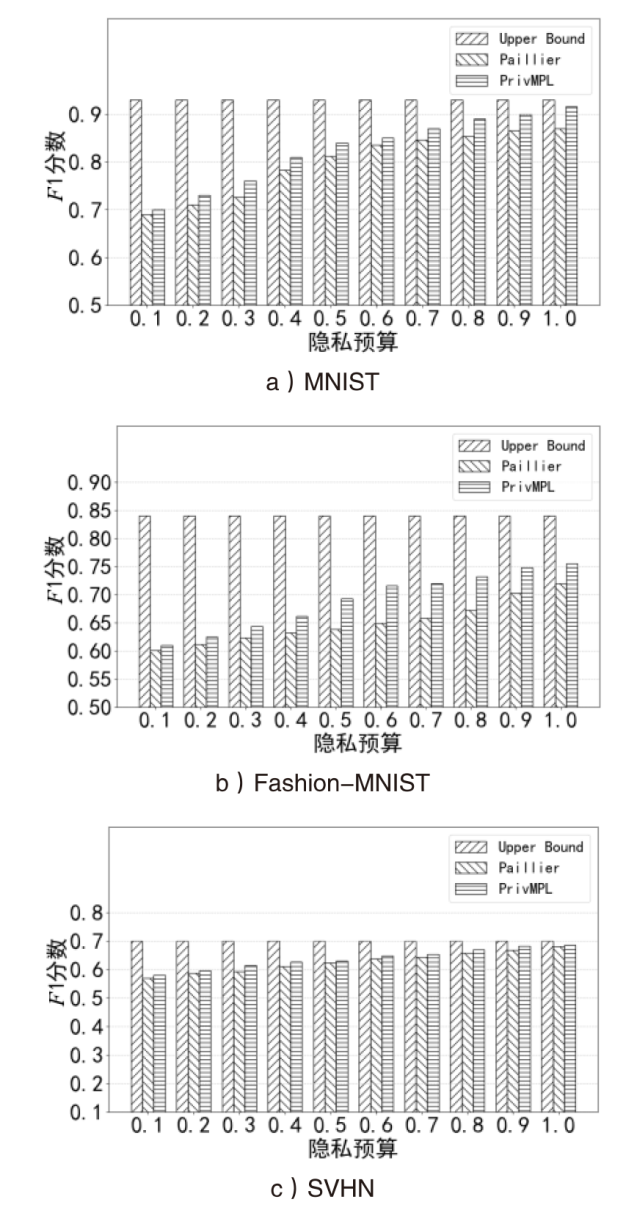

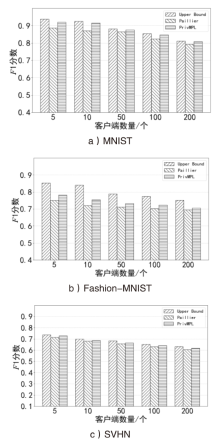

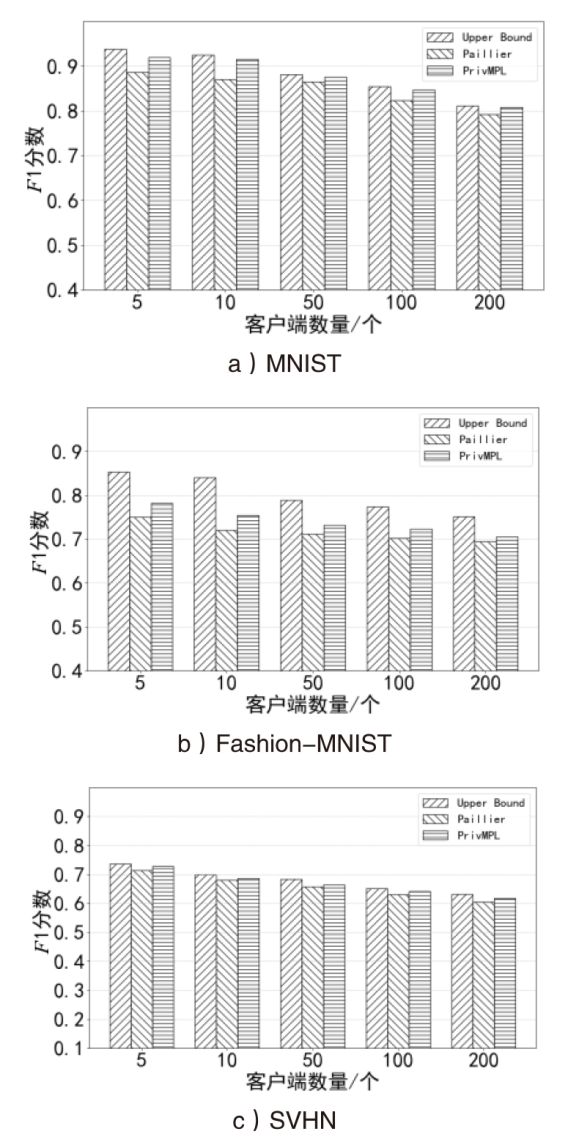

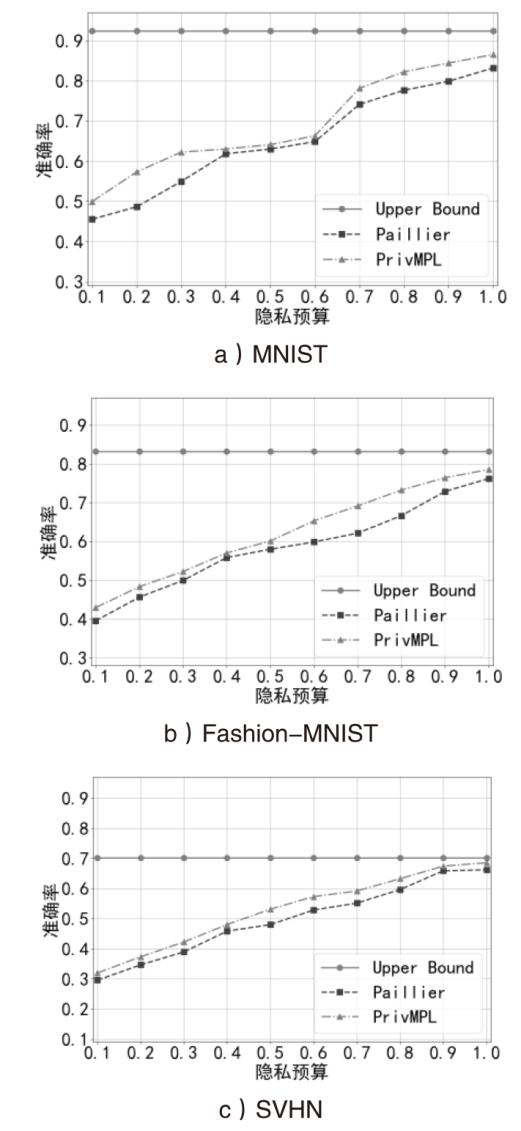

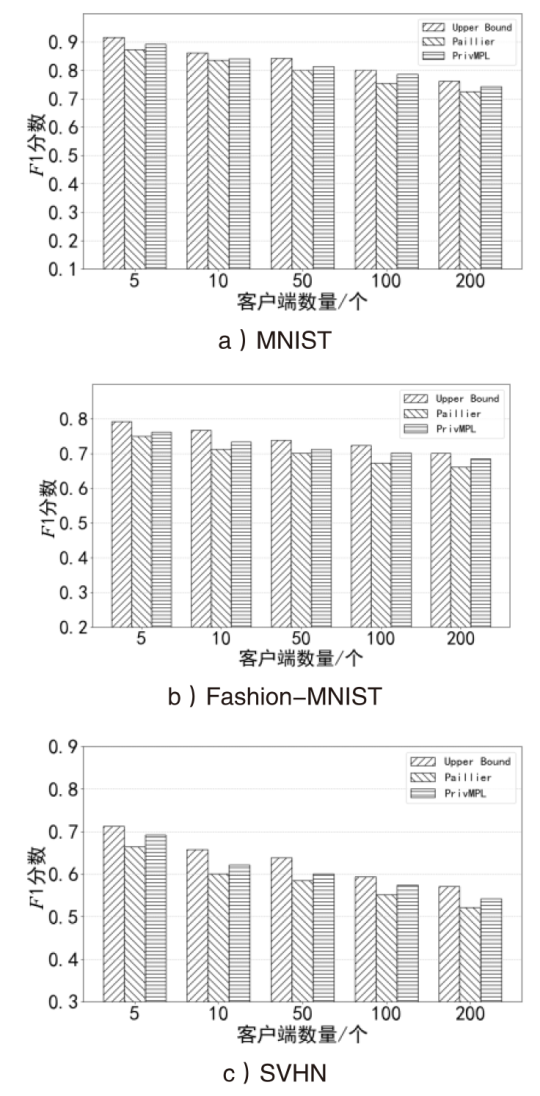

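In the era of big data, protecting data privacy in machine learning has become increasingly important. In multi-party learning, an attacker can infer features of the original data from shared information such as gradients and model parameters. In addition, some participants may collude for their own benefit and share data that should remain confidential, which undermines both the fairness and the privacy requirements of multi-party learning. To address these problems, this paper proposes PrivMPL, a scheme for distributed learning that fuses multi-key homomorphic encryption with differential privacy. Its core goal is efficient model training while guaranteeing data privacy. In the scheme, each local client encrypts its updated model parameters under an aggregated public key, and decryption requires the cooperation of all data users. The server achieves differential privacy by adding Gaussian noise to the aggregated parameters. The scheme prevents the privacy leakage caused by sharing information during multi-party training and is robust to collusion between data users and the server. To validate PrivMPL, it is compared with a Paillier-based homomorphic-encryption multi-party learning scheme, using model accuracy as the evaluation metric. The experimental results show that PrivMPL significantly improves model accuracy, further demonstrating its advantages in data privacy protection and model performance.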
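The server-side step described in the abstract, adding Gaussian noise to the aggregated parameters, can be illustrated with a minimal Python sketch. It rests on assumptions that the abstract does not confirm: encryption is omitted entirely (the aggregation runs on plaintext lists), each client update is clipped to a hypothetical L2 bound `clip_norm`, and the noise scale follows the classic Gaussian-mechanism bound, which holds only for 0 < ε < 1. The function names are illustrative and are not taken from PrivMPL.

```python
import math
import random


def gaussian_sigma(sensitivity, epsilon, delta):
    """Noise scale of the classic Gaussian mechanism (assumed calibration)."""
    if not (0 < epsilon < 1) or not (0 < delta < 1):
        raise ValueError("classic bound needs 0 < epsilon < 1 and 0 < delta < 1")
    return math.sqrt(2.0 * math.log(1.25 / delta)) * sensitivity / epsilon


def clip_l2(update, clip_norm):
    """Scale one client's update so that its L2 norm is at most clip_norm."""
    norm = math.sqrt(sum(x * x for x in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * scale for x in update]


def noisy_average(updates, clip_norm, epsilon, delta, rng):
    """Sum the clipped updates, add Gaussian noise to the sum, then average.

    Plaintext stand-in: in the scheme itself the server would work on
    ciphertexts under the aggregated public key, which is not modeled here.
    """
    n = len(updates)
    clipped = [clip_l2(u, clip_norm) for u in updates]
    total = [sum(column) for column in zip(*clipped)]
    # Replacing one client's clipped update moves the sum by at most 2 * clip_norm.
    sigma = gaussian_sigma(2.0 * clip_norm, epsilon, delta)
    return [(t + rng.gauss(0.0, sigma)) / n for t in total]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed only to make the demo repeatable
    client_updates = [[3.0, 4.0], [0.3, 0.4], [-1.0, 2.0]]
    print(noisy_average(client_updates, clip_norm=1.0, epsilon=0.5, delta=1e-5, rng=rng))
```

Clipping before aggregation is what bounds the sensitivity of the sum, so the noise scale depends only on `clip_norm`, ε, and δ, not on the data. With only three clients the noise dominates the average; the relative error shrinks as the number of participants grows.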

Cite this article

WANG Teng, FAN Kunwei, ZHANG Yao. A Fusion Scheme of Multi-Key Homomorphic Encryption and Differential Privacy for Distributed Learning[J]. Netinfo Security, 2026, 26(2): 236-250.
