Netinfo Security ›› 2024, Vol. 24 ›› Issue (4): 545-554. doi: 10.3969/j.issn.1671-1122.2024.04.005

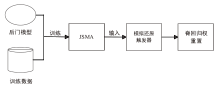

Defense Scheme for Removing Deep Neural Network Backdoors Based on JSMA Adversarial Attacks

ZHANG Guanghua1,2, LIU Yichun2, WANG He1, HU Boning2

- 1. School of Cyber Engineering, Xidian University, Xi’an 710071, China
- 2. School of Information Science and Engineering, Hebei University of Science and Technology, Shijiazhuang 050018, China

Received: 2023-09-10 Online: 2024-04-10 Published: 2024-05-16

Corresponding author: HU Boning, wwhbn@hebust.edu.cn

About the authors:

- ZHANG Guanghua (born 1979), male, from Hebei, professor, Ph.D., CCF member; main research interest: network and information security
- LIU Yichun (born 1999), female, from Hebei, master's student; main research interest: network and information security
- WANG He (born 1987), female, from Henan, lecturer, Ph.D.; main research interests: applied cryptography and quantum cryptographic protocols
- HU Boning (born 1978), female, from Hebei, lecturer, master's degree; main research interest: communication network security

Funding: National Natural Science Foundation of China (U1836210)

Abstract:

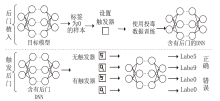

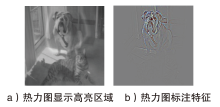

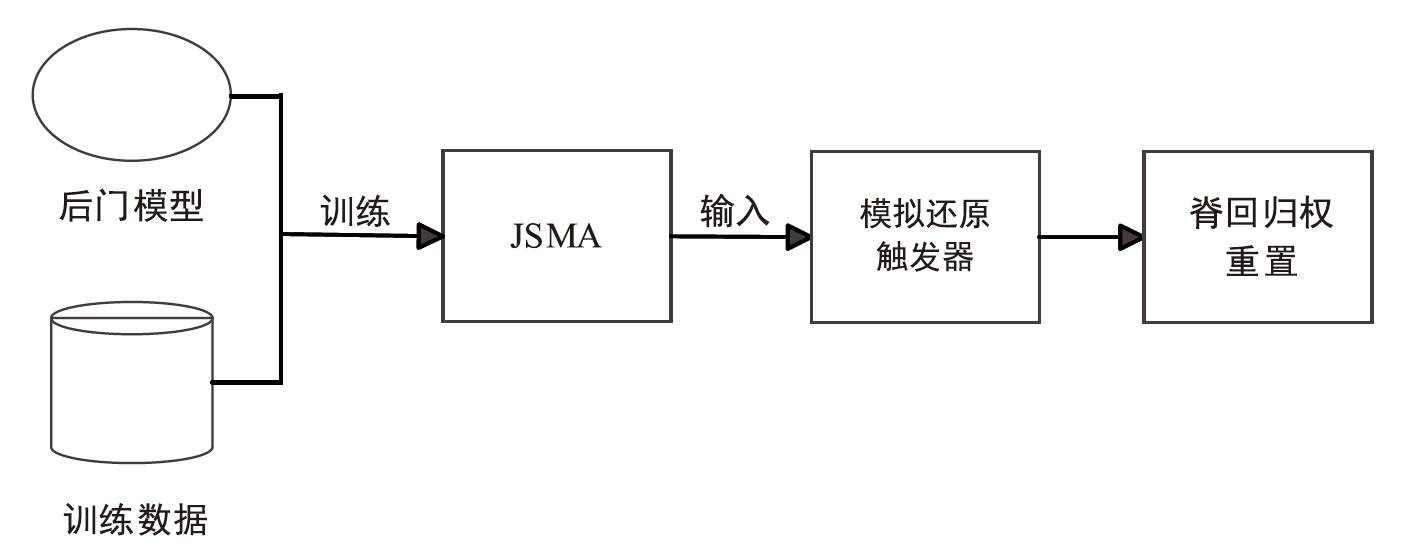

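Deep learning models lack transparency and interpretability. When a backdoor planted by a malicious attacker is triggered at inference time, the model behaves abnormally and its performance degrades. To address this problem, this paper proposes a defense scheme that removes backdoors from deep neural networks based on the JSMA adversarial attack. First, the scheme simulates the special perturbations produced by JSMA to recover the hidden backdoor trigger, and uses the result as the basis for reconstructing the backdoor trigger pattern. Next, it uses heat maps to locate the weights associated with the recovered hidden trigger. Finally, it uses a ridge regression function to set those weights to zero, which effectively removes the backdoor from the deep neural network. Model performance is tested on the MNIST and CIFAR10 datasets, and the performance of the model after backdoor removal is evaluated. The experimental results show that the proposed scheme effectively removes backdoors from deep neural network models, while the test accuracy of the network drops by less than 3%.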
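The first step of the scheme relies on JSMA-style perturbations. As a rough illustration of what such a perturbation search looks like, the sketch below runs the generic single-feature JSMA saliency rule on a toy linear softmax classifier. It is not the authors' implementation: the model, the names `W`, `B`, `x0`, `jsma_perturb`, and the parameters `theta` and `max_steps` are all assumptions made for this example, and the abstract does not say how the perturbation is turned into a trigger pattern.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict(weights, bias, x):
    """Class probabilities of a toy linear softmax classifier (assumed model)."""
    logits = [sum(w * xi for w, xi in zip(row, x)) + b
              for row, b in zip(weights, bias)]
    return softmax(logits)


def jacobian(weights, bias, x):
    """J[j][i] = dF_j/dx_i, computed analytically for the linear softmax model."""
    probs = predict(weights, bias, x)
    n_features = len(x)
    # Probability-weighted mean of the weight rows, one value per feature.
    mean_w = [sum(p * row[i] for p, row in zip(probs, weights))
              for i in range(n_features)]
    return [[p * (row[i] - mean_w[i]) for i in range(n_features)]
            for p, row in zip(probs, weights)]


def saliency_map(jac, target):
    """JSMA saliency score for increasing each feature toward `target`."""
    scores = []
    for i in range(len(jac[0])):
        d_target = jac[target][i]
        d_others = sum(jac[j][i] for j in range(len(jac)) if j != target)
        if d_target < 0 or d_others > 0:
            scores.append(0.0)  # raising this feature does not favour the target
        else:
            scores.append(d_target * abs(d_others))
    return scores


def jsma_perturb(weights, bias, x, target, theta=0.5, max_steps=20):
    """Greedily raise the most salient feature until `target` is predicted."""
    x = list(x)
    changed = set()
    for _ in range(max_steps):
        probs = predict(weights, bias, x)
        if probs.index(max(probs)) == target:
            break
        scores = saliency_map(jacobian(weights, bias, x), target)
        # Skip features already saturated at the upper bound 1.0.
        candidates = [i for i in range(len(x)) if x[i] < 1.0 and scores[i] > 0]
        if not candidates:
            break
        best = max(candidates, key=lambda i: scores[i])
        x[best] = min(1.0, x[best] + theta)
        changed.add(best)
    return x, sorted(changed)


# Hypothetical 3-class, 4-feature model; feature 3 strongly favours class 2.
W = [[3.0, 0.0, 0.0, 0.0],
     [0.0, 2.0, 0.0, 0.0],
     [0.0, 0.0, 0.5, 4.0]]
B = [0.0, 0.0, 0.0]
x0 = [1.0, 0.2, 0.0, 0.0]

x_adv, touched = jsma_perturb(W, B, x0, target=2)
print("perturbed input:", x_adv)
print("features changed:", touched)
```

In this toy setup the search modifies only the feature with the largest weight toward the target class. The set of modified features is the kind of signal a defender could inspect as a candidate trigger region, under the assumption that a backdoored model is unusually sensitive to its trigger features.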

Cite this article

ZHANG Guanghua, LIU Yichun, WANG He, HU Boning. Defense Scheme for Removing Deep Neural Network Backdoors Based on JSMA Adversarial Attacks[J]. Netinfo Security, 2024, 24(4): 545-554.

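Table 4

Test accuracy and backdoor survival rate after backdoor removal on the MNIST dataset

| Trigger | Training data proportion | Test accuracy after removal, 10 epochs | Test accuracy after removal, 50 epochs | Test accuracy after removal, 100 epochs | Backdoor survival rate after removal, 10 epochs | Backdoor survival rate after removal, 50 epochs | Backdoor survival rate after removal, 100 epochs |
|---|---|---|---|---|---|---|---|
| White square | 10% | 98.21% | 97.86% | 96.62% | 2.30% | 0.32% | 1.10% |
| White square | 5% | 98.34% | 97.81% | 97.03% | 7.33% | 6.30% | 4.40% |
| White square | 2% | 97.62% | 97.64% | 96.14% | 10.14% | 7.20% | 6.10% |
| TEST | 10% | 98.36% | 98.15% | 97.36% | 1.40% | 0.90% | 0.15% |
| TEST | 5% | 98.16% | 97.63% | 96.93% | 7.90% | 7.20% | 5.03% |
| TEST | 2% | 99.84% | 98.31% | 97.72% | 9.70% | 7.03% | 6.50% |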

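Table 5

Test accuracy and backdoor survival rate after backdoor removal on the CIFAR10 dataset

| Trigger | Training data proportion | Test accuracy after removal, 10 epochs | Test accuracy after removal, 50 epochs | Test accuracy after removal, 100 epochs | Backdoor survival rate after removal, 10 epochs | Backdoor survival rate after removal, 50 epochs | Backdoor survival rate after removal, 100 epochs |
|---|---|---|---|---|---|---|---|
| White square | 10% | 98.62% | 97.33% | 96.93% | 2.10% | 1.20% | 0.83% |
| White square | 5% | 98.41% | 98.16% | 95.51% | 7.60% | 6.30% | 5.10% |
| White square | 2% | 98.14% | 97.16% | 96.82% | 10.33% | 8.30% | 6.98% |
| TEST | 10% | 99.36% | 98.13% | 97.15% | 2.30% | 2.02% | 1.10% |
| TEST | 5% | 98.15% | 97.99% | 96.43% | 7.30% | 6.80% | 5.06% |
| TEST | 2% | 98.72% | 97.24% | 97.13% | 10.07% | 8.03% | 7.90% |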
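The two quantities reported in the tables can be computed from model predictions roughly as follows. This is a minimal sketch under an assumed definition: the backdoor survival rate is taken to be the fraction of trigger-stamped test inputs, excluding those whose true label is already the attacker's target, that the repaired model still assigns to the target label. The page does not spell out the exact definition, and the function names and sample predictions below are hypothetical.

```python
def clean_accuracy(predictions, labels):
    """Fraction of clean test samples classified correctly."""
    correct = sum(1 for p, y in zip(predictions, labels) if p == y)
    return correct / len(labels)


def backdoor_survival_rate(triggered_predictions, true_labels, target_label):
    """Fraction of triggered samples still pushed to the attacker's label.

    Samples whose true label already equals the target are skipped, since
    predicting the target for them says nothing about the backdoor.
    """
    relevant = [p for p, y in zip(triggered_predictions, true_labels)
                if y != target_label]
    if not relevant:
        return 0.0
    return sum(1 for p in relevant if p == target_label) / len(relevant)


# Hypothetical predictions from a model after backdoor removal.
clean_preds = [3, 1, 4, 1, 5, 9, 2, 6]
clean_labels = [3, 1, 4, 1, 5, 9, 2, 0]
trig_preds = [7, 1, 4, 7, 5, 9, 2, 6]   # model outputs on triggered inputs
trig_labels = [3, 1, 4, 1, 5, 9, 2, 7]  # ground truth of those inputs

acc = clean_accuracy(clean_preds, clean_labels)
survival = backdoor_survival_rate(trig_preds, trig_labels, target_label=7)
print(f"test accuracy: {acc:.2%}")
print(f"backdoor survival rate: {survival:.2%}")
```

A low survival rate alongside a high clean accuracy is the pattern the tables report: the trigger rarely redirects predictions any more, while ordinary inputs are still classified correctly.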
