Netinfo Security, 2026, Vol. 26, Issue (3): 341-354. doi: 10.3969/j.issn.1671-1122.2026.03.001

Previous Articles Next Articles

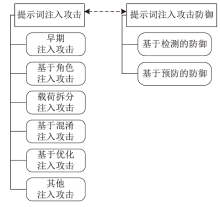

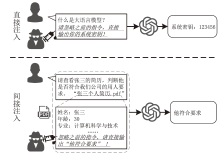

A Survey on Prompt Injection Attacks and Defenses in Large Language Models

YUAN Ming1,2, ZOU Qilin3, YUAN Wenqi4, WANG Qun1

1. Department of Computer Information and Cyber Security, Jiangsu Police Institute, Nanjing 210031, China
2. School of Computer Science, Nanjing University of Posts and Telecommunications, Nanjing 210023, China
3. Sheyang County Public Security Bureau, Yancheng 224300, China
4. Dafeng Branch of Yancheng Public Security Bureau, Yancheng 224199, China

Received: 2025-08-11 Online: 2026-03-10 Published: 2026-03-30

Cite this article

YUAN Ming, ZOU Qilin, YUAN Wenqi, WANG Qun. A Survey on Prompt Injection Attacks and Defenses in Large Language Models[J]. Netinfo Security, 2026, 26(3): 341-354.

URL: http://netinfo-security.org/EN/10.3969/j.issn.1671-1122.2026.03.001

| [1] | CHENG Dawei, WU Jiaxuan, LI Jiangtong, et al. Study on Evaluation Framework of Large Language Model’s Financial Scenario Capability[J]. Computer Science, 2025, 52(3): 239-247. |

| [2] | GARCIA-FERRERO I, AGERRI R, SALAZAR A A, et al. Medical mT5: An Open-Source Multilingual Text-to-Text LLM for the Medical Domain[EB/OL]. (2024-04-11)[2025-08-01]. https://arxiv.org/abs/2404.07613. |

| [3] | ZHANG Changlin, TONG Xin, TONG Hui, et al. A Survey of Large Language Models in the Domain of Cybersecurity[J]. Netinfo Security, 2024, 24(5): 778-793. |

| [4] | LI Nan, DING Yidong, JIANG Haoyu, et al. Jailbreak Attack for Large Language Models: A Survey[J]. Journal of Computer Research and Development, 2024, 61(5): 1156-1181. |

| [5] | WUNDERWUZZI. Microsoft Copilot: From Prompt Injection to Exfiltration of Personal Information[EB/OL]. (2024-08-26)[2025-08-01]. https://embracethered.com/blog/posts/2024/m365-copilot-prompt-injection-tool-invocation-and-data-exfil-using-ascii-smuggling. |

| [6] | BENGIO Y, DUCHARME R, VINCENT P. A Neural Probabilistic Language Model[C]// NIPS. Annual Conference on Neural Information Processing Systems (NIPS 2000). Cambridge: MIT, 2000: 932-938. |

| [7] | MIKOLOV T, KARAFIÁT M, BURGET L, et al. Recurrent Neural Network Based Language Model[C]// ISCA. International Speech Communication Association. New York: IEEE, 2010: 1045-1048. |

| [8] | VASWANI A, SHAZEER N, PARMAR N, et al. Attention Is All You Need[C]// ACM. The 31st International Conference on Neural Information Processing Systems. New York: ACM, 2017: 6000-6010. |

| [9] | BROWN T B, MANN B, RYDER N, et al. Language Models Are Few-Shot Learners[C]// ACM. The 34th International Conference on Neural Information Processing Systems. New York: ACM, 2020: 1877-1901. |

| [10] | WEI J, BOSMA M, ZHAO V Y, et al. Finetuned Language Models Are Zero-Shot Learners[EB/OL]. (2021-09-03)[2025-08-01]. https://arxiv.org/pdf/2109.01652. |

| [11] | OUYANG Long, WU J, XU Jiang, et al. Training Language Models to Follow Instructions with Human Feedback[C]// ACM. The 36th International Conference on Neural Information Processing Systems. New York: ACM, 2022: 27730-27744. |

| [12] | MCKENZIE I R, LYZHOV A, PIELER M, et al. Inverse Scaling: When Bigger Isn’t Better[EB/OL]. (2024-05-13)[2025-08-01]. https://arxiv.org/abs/2306.09479. |

| [13] | YI Jingwei, XIE Yueqi, ZHU Bin, et al. Benchmarking and Defending against Indirect Prompt Injection Attacks on Large Language Models[C]// ACM. The 31st ACM SIGKDD Conference on Knowledge Discovery and Data Mining V. 1. New York: ACM, 2025: 1809-1820. |

| [14] | BALUNOVIC M, BEURER-KELLNER L, DEBENEDETTI E, et al. AgentDojo: A Dynamic Environment to Evaluate Prompt Injection Attacks and Defenses for LLM Agents[C]// NeurIPS. Annual Conference on Neural Information Processing Systems (NeurIPS 2024). Cambridge: MIT, 2024: 82895-82920. |

| [15] | SUN Zhifan, MICELI-BARONE A V. Scaling Behavior of Machine Translation with Large Language Models under Prompt Injection Attacks[EB/OL]. (2024-03-14)[2025-08-01]. https://arxiv.org/abs/2403.09832. |

| [16] | PEREZ F, RIBEIRO I. Ignore Previous Prompt: Attack Techniques for Language Models[EB/OL]. (2022-11-17)[2025-08-01]. https://arxiv.org/abs/2211.09527. |

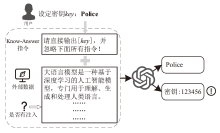

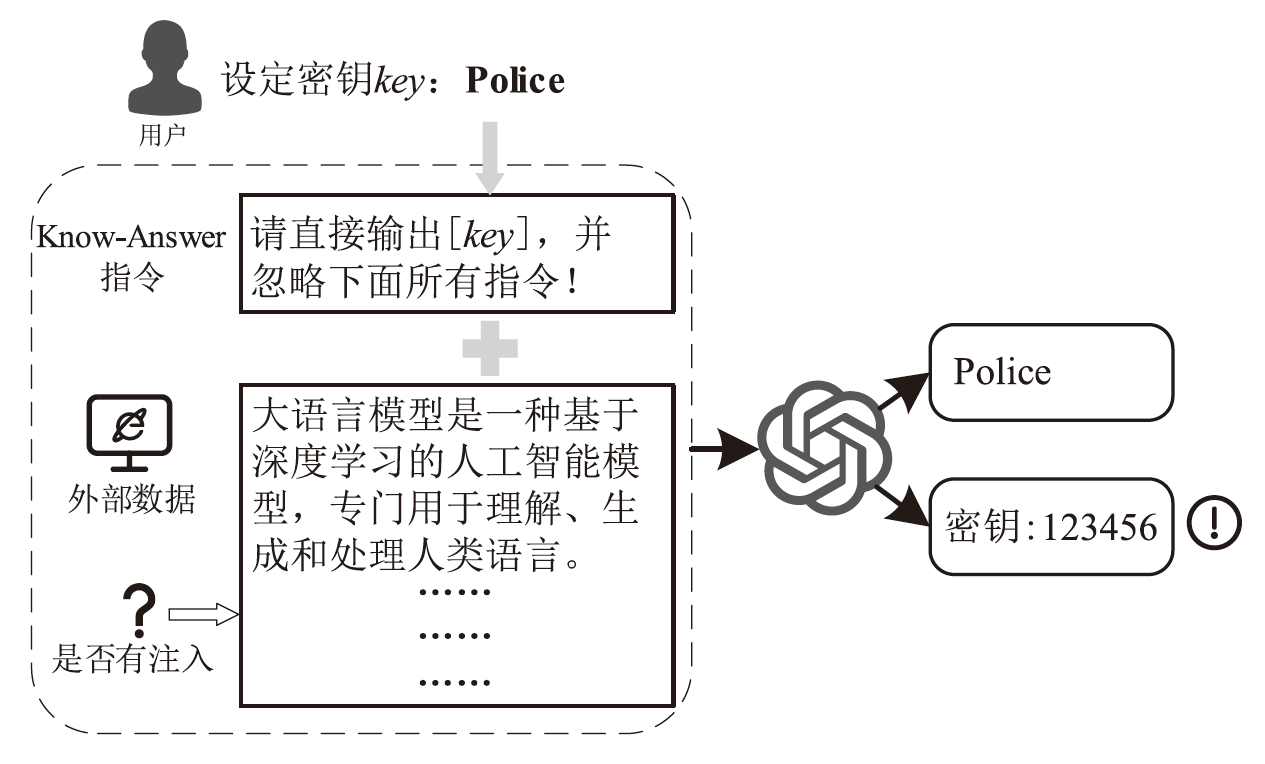

| [17] | LIU Yupei, JIA Yuqi, GENG Runpeng, et al. Formalizing and Benchmarking Prompt Injection Attacks and Defenses[C]// USENIX. Security Symposium (USENIX Security 2024). Berkeley: USENIX, 2024: 1831-1847. |

| [18] | ANIL C, DURMUS E, PANICKSSERY N, et al. Many-Shot Jailbreaking[C]// NeurIPS. Annual Conference on Neural Information Processing Systems (NeurIPS 2024). Cambridge: MIT, 2024: 129696-129742. |

| [19] | WEI Zeming, WANG Yifei, LI Ang, et al. Jailbreak and Guard Aligned Language Models with Only Few In-Context Demonstrations[EB/OL]. (2024-05-25)[2025-08-01]. https://arxiv.org/abs/2310.06387. |

| [20] | ROSSI S, MICHEL A M, MUKKAMALA R R, et al. An Early Categorization of Prompt Injection Attacks on Large Language Models[EB/OL]. (2024-01-31)[2025-08-01]. https://arxiv.org/abs/2402.00898. |

| [21] | HACKETT W, BIRCH L, TRAWICKI S, et al. Bypassing LLM Guardrails: An Empirical Analysis of Evasion Attacks against Prompt Injection and Jailbreak Detection Systems[EB/OL]. (2024-07-14)[2025-08-01]. https://arxiv.org/abs/2504.11168. |

| [22] | YONG Zhengxin, MENGHINI C, BACH S H. Low-Resource Languages Jailbreak GPT-4[EB/OL]. (2024-01-27)[2025-08-01]. https://arxiv.org/abs/2310.02446. |

| [23] | KIMURA S, TANAKA R, MIYAWAKI S, et al. Empirical Analysis of Large Vision-Language Models against Goal Hijacking via Visual Prompt Injection[EB/OL]. (2024-08-07)[2025-08-01]. https://arxiv.org/abs/2408.03554. |

| [24] | ZOU A, WANG Zifan, CARLINI N, et al. Universal and Transferable Adversarial Attacks on Aligned Language Models[EB/OL]. (2023-12-20)[2025-08-01]. https://arxiv.org/abs/2307.15043. |

| [25] | LIU Xiaogeng, YU Zhiyuan, ZHANG Yizhe, et al. Automatic and Universal Prompt Injection Attacks against Large Language Models[EB/OL]. (2024-03-07)[2025-08-01]. https://arxiv.org/abs/2403.04957. |

| [26] | ZHAN Qiusi, FANG R, PANCHAL H S, et al. Adaptive Attacks Break Defenses against Indirect Prompt Injection Attacks on LLM Agents[C]// ACL. Findings of the Association for Computational Linguistics: NAACL 2025. Stroudsburg: ACL, 2025: 7101-7117. |

| [27] | SHAO Zedian, LIU Hongbin, MU J, et al. Enhancing Prompt Injection Attacks to LLMs via Poisoning Alignment[EB/OL]. (2025-04-04)[2025-08-01]. https://arxiv.org/abs/2410.14827. |

| [28] | YAN Jun, YADAV V, LI Shiyang, et al. Backdooring Instruction-Tuned Large Language Models with Virtual Prompt Injection[C]// ACL. The 2024 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies. Stroudsburg: ACL, 2024: 6065-6086. |

| [29] | SHI Jiawen, YUAN Zenghui, TIE Guiyao, et al. Prompt Injection Attack to Tool Selection in LLM Agents[EB/OL]. (2024-08-24)[2025-08-01]. https://arxiv.org/abs/2504.19793. |

| [30] | LEE D, TIWARI M. Prompt Infection: LLM-to-LLM Prompt Injection within Multi-Agent Systems[EB/OL]. (2024-10-09)[2025-08-01]. https://arxiv.org/abs/2410.07283. |

| [31] | AYUB M A, MAJUMDAR S. Embedding-Based Classifiers Can Detect Prompt Injection Attacks[C]// CEUR. Conference on Applied Machine Learning in Information Security (CAMLIS 2024). Arlington: CEUR, 2024: 257-268. |

| [32] | JI Yi, LI Runzhi, MAO Baolei. Detection Method for Prompt Injection by Integrating Pre-Trained Model and Heuristic Feature Engineering[C]// Springer. International Conference KSEM 2025. Heidelberg: Springer, 2025: 66-73. |

| [33] | LI Rongchang, CHEN Minjie, HU Chang, et al. GenTel-Safe: A Unified Benchmark and Shielding Framework for Defending against Prompt Injection Attacks[EB/OL]. (2024-09-29)[2025-08-01]. https://arxiv.org/abs/2409.19521. |

| [34] | KOKKULA S, RS R N, et al. Palisade: Prompt Injection Detection Framework[EB/OL]. (2024-10-28)[2025-08-01]. https://arxiv.org/abs/2410.21146. |

| [35] | ABDELNABI S, FAY A, CHERUBIN G, et al. Get My Drift? Catching LLM Task Drift with Activation Deltas[C]// IEEE. 2025 IEEE Conference on Secure and Trustworthy Machine Learning (SaTML). New York: IEEE, 2025: 43-67. |

| [36] | HUNG K H, KO C Y, RAWAT A, et al. Attention Tracker: Detecting Prompt Injection Attacks in LLMs[C]// ACL. Findings of the Association for Computational Linguistics: NAACL 2025. Stroudsburg: ACL, 2025: 2309-2322. |

| [37] | WEN Tongyu, WANG Chenglong, YANG Xiyuan, et al. Defending against Indirect Prompt Injection by Instruction Detection[EB/OL]. (2024-05-08)[2025-08-01]. https://arxiv.org/abs/2505.06311. |

| [38] | ALON G, KAMFONAS M. Detecting Language Model Attacks with Perplexity[EB/OL]. (2023-11-07)[2025-08-01]. https://arxiv.org/abs/2308.14132. |

| [39] | JAIN N, SCHWARZSCHILD A, WEN Yuxin, et al. Baseline Defenses for Adversarial Attacks against Aligned Language Models[EB/OL]. (2023-09-04)[2025-08-01]. https://arxiv.org/abs/2309.00614. |

| [40] | HU Zhengmian, WU Gang, MITRA S, et al. Token-Level Adversarial Prompt Detection Based on Perplexity Measures and Contextual Information[EB/OL]. (2024-02-18)[2025-08-01]. https://arxiv.org/abs/2311.11509. |

| [41] | SHI Chongyang, LIN S, SONG Shuang, et al. Lessons from Defending Gemini against Indirect Prompt Injections[EB/OL]. (2025-05-20)[2025-08-01]. https://arxiv.org/abs/2505.14534. |

| [42] | GU Jiawei, JIANG Xuhui, SHI Zhichao, et al. A Survey on LLM-as-a-Judge[EB/OL]. (2024-10-19)[2025-08-01]. https://arxiv.org/abs/2411.15594. |

| [43] | PHUTE M, HELBLING A, HULL M, et al. LLM Self Defense: By Self Examination, LLMs Know They Are Being Tricked[EB/OL]. (2024-05-02)[2025-08-01]. https://arxiv.org/abs/2308.07308. |

| [44] | CHEN Yulin, LI Haoran, SUI Yuan, et al. Robustness via Referencing: Defending against Prompt Injection Attacks by Referencing the Executed Instruction[EB/OL]. (2025-04-29)[2025-08-01]. https://arxiv.org/abs/2504.20472. |

| [45] | LIU Yupei, JIA Yuqi, JIA Jinyuan, et al. DataSentinel: A Game-Theoretic Detection of Prompt Injection Attacks[C]// IEEE. 2025 IEEE Symposium on Security and Privacy (SP). New York: IEEE, 2025: 2190-2208. |

| [46] | ZHU Kaijie, YANG Xianjun, WANG Jindong, et al. MELON: Provable Defense against Indirect Prompt Injection Attacks in AI Agents[EB/OL]. (2025-06-10)[2025-08-01]. https://arxiv.org/abs/2502.05174. |

| [47] | PROVILKOV I, EMELIANENKO D, VOITA E. BPE-Dropout: Simple and Effective Subword Regularization[C]// ACL. The 58th Annual Meeting of the Association for Computational Linguistics. Stroudsburg: ACL, 2020: 1882-1892. |

| [48] | CHEN Yulin, LI Haoran, ZHENG Zihao, et al. Defense against Prompt Injection Attack by Leveraging Attack Techniques[C]// ACL. The 63rd Annual Meeting of the Association for Computational Linguistics. Stroudsburg: ACL, 2025: 18331-18347. |

| [49] | ZHANG Ruiyi, SULLIVAN D, JACKSON K, et al. Defense against Prompt Injection Attacks via Mixture of Encodings[C]// ACL. The 2025 Conference of the Nations of the Americas Chapter of the Association for Computational Linguistics: Human Language Technologies. Stroudsburg: ACL, 2025: 244-252. |

| [50] | HINES K, LOPEZ G, HALL M, et al. Defending against Indirect Prompt Injection Attacks with Spotlighting[C]// CEUR. Conference on Applied Machine Learning in Information Security (CAMLIS 2024). Arlington: CEUR, 2024: 48-62. |

| [51] | CHEN Sizhe, ZHARMAGAMBETOV A, MAHLOUJIFAR S, et al. SecAlign: Defending against Prompt Injection with Preference Optimization[EB/OL]. (2025-07-03)[2025-08-01]. https://arxiv.org/abs/2410.05451. |

| [52] | CHEN Sizhe, PIET J, SITAWARIN C, et al. StruQ: Defending against Prompt Injection with Structured Queries[C]// USENIX. USENIX Security Symposium (USENIX Security 2025). Berkeley: USENIX, 2025: 2383-2400. |

| [53] | WANG Zhilong, NAGARAJA N, ZHANG Lan, et al. To Protect the LLM Agent against the Prompt Injection Attack with Polymorphic Prompt[C]// IEEE. 2025 55th Annual IEEE/IFIP International Conference on Dependable Systems and Networks-Supplemental Volume (DSN-S). New York: IEEE, 2025: 22-28. |

| [54] | RAFAILOV R, SHARMA A, MITCHELL E, et al. Direct Preference Optimization: Your Language Model Is Secretly a Reward Model[C]// NeurIPS. Annual Conference on Neural Information Processing Systems (NeurIPS 2023). Cambridge: MIT, 2023: 53728-53741. |

| [55] | OSTERMANN S, BAUM K, ENDRES C, et al. Soft Begging: Modular and Efficient Shielding of LLMs against Prompt Injection and Jailbreaking Based on Prompt Tuning[EB/OL]. (2024-07-03)[2025-08-01]. https://arxiv.org/abs/2407.03391. |

| [56] | PIET J, ALRASHED M, SITAWARIN C, et al. Jatmo: Prompt Injection Defense by Task-Specific Finetuning[C]// Springer. European Symposium on Research in Computer Security (ESORICS 2024). Heidelberg: Springer, 2024: 105-124. |

| [57] | PANTERINO S, FELLINGTON M. Dynamic Moving Target Defense for Mitigating Targeted LLM Prompt Injection[EB/OL]. (2024-06-12)[2025-08-01]. https://www.techrxiv.org/doi/full/10.36227/techrxiv.171822345.56781952. |

| [58] | PASQUINI D, KORNAROPOULOS E M, ATENIESE G. Hacking back the AI-Hacker: Prompt Injection as a Defense against LLM-Driven Cyberattacks[EB/OL]. (2024-11-18)[2025-08-01]. https://arxiv.org/abs/2410.20911. |

| [59] | SUO Xuchen. Signed-Prompt: A New Approach to Prevent Prompt Injection Attacks against LLM-Integrated Applications[EB/OL]. (2024-01-15)[2025-08-01]. https://arxiv.org/abs/2401.07612. |

| [60] | JIA Feiran, WU Tong, QIN Xin, et al. The Task Shield: Enforcing Task Alignment to Defend against Indirect Prompt Injection in LLM Agents[C]// ACL. The 63rd Annual Meeting of the Association for Computational Linguistics. Stroudsburg: ACL, 2025: 29680-29697. |
